
The pace of 5G investment and adoption is accelerating. According to the GSMA Mobile Economy 2023 report, nearly $1.4 trillion will be spent on 5G capital expenditure (CAPEX) between 2023 and 2030. The radio access network (RAN) may account for more than 60% of that spend.

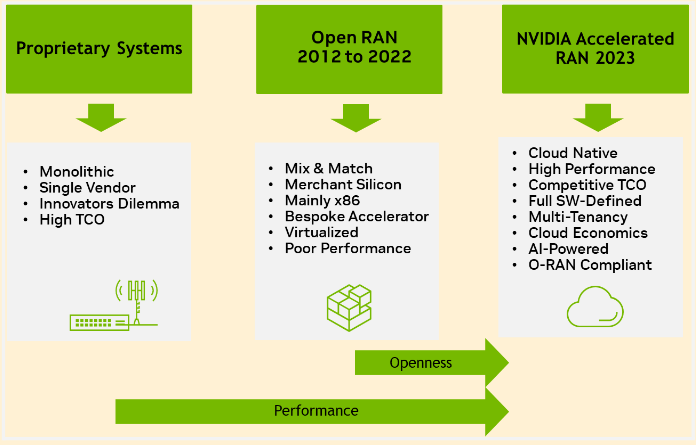

Capital expenditure is increasingly shifting from the traditional approach built on proprietary hardware to virtualized RAN (vRAN) and Open RAN architectures, which can benefit from cloud economics and do not require dedicated hardware. Despite these benefits, Open RAN adoption is struggling because existing technology has not yet delivered on the promise of cloud economics and cannot provide high performance and flexibility at the same time.

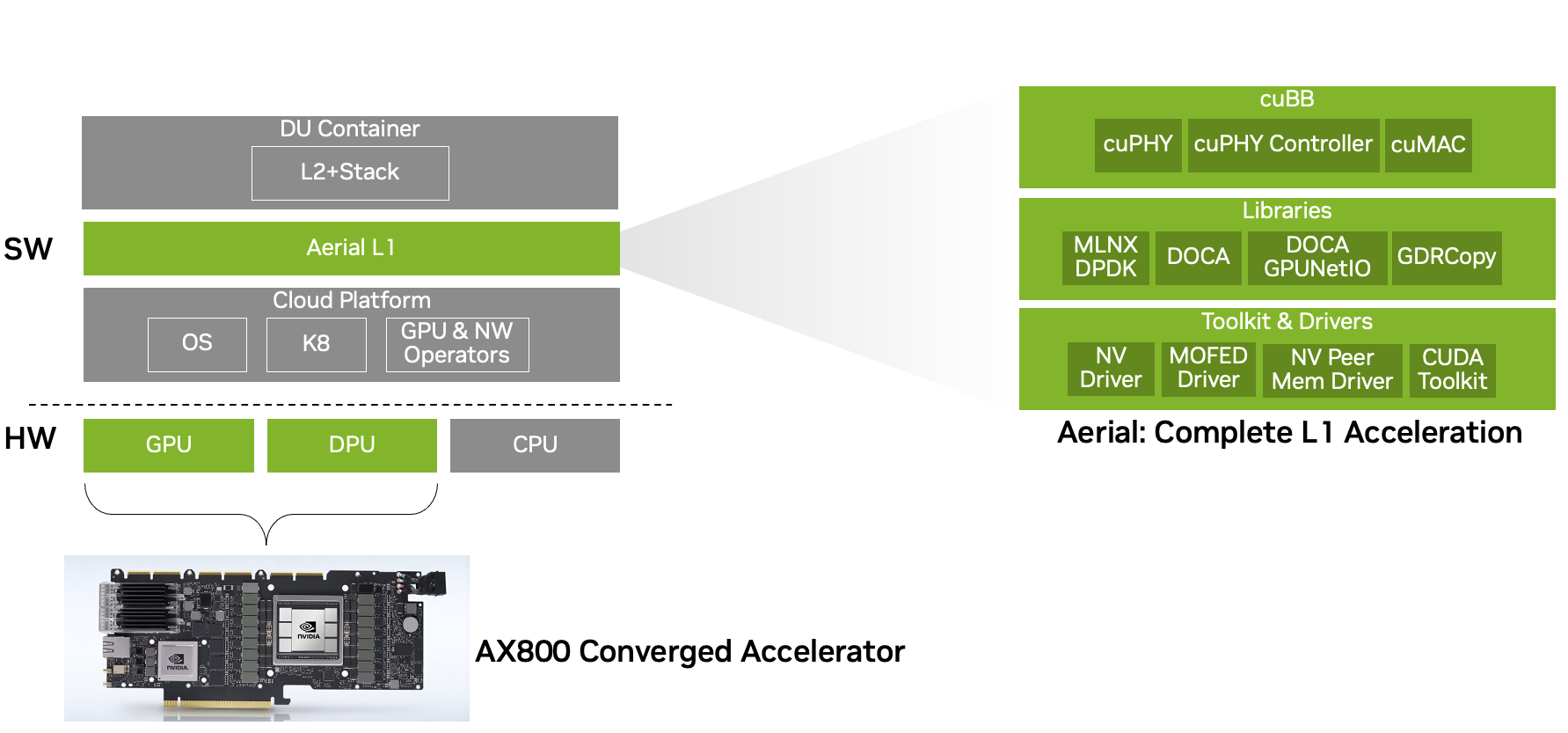

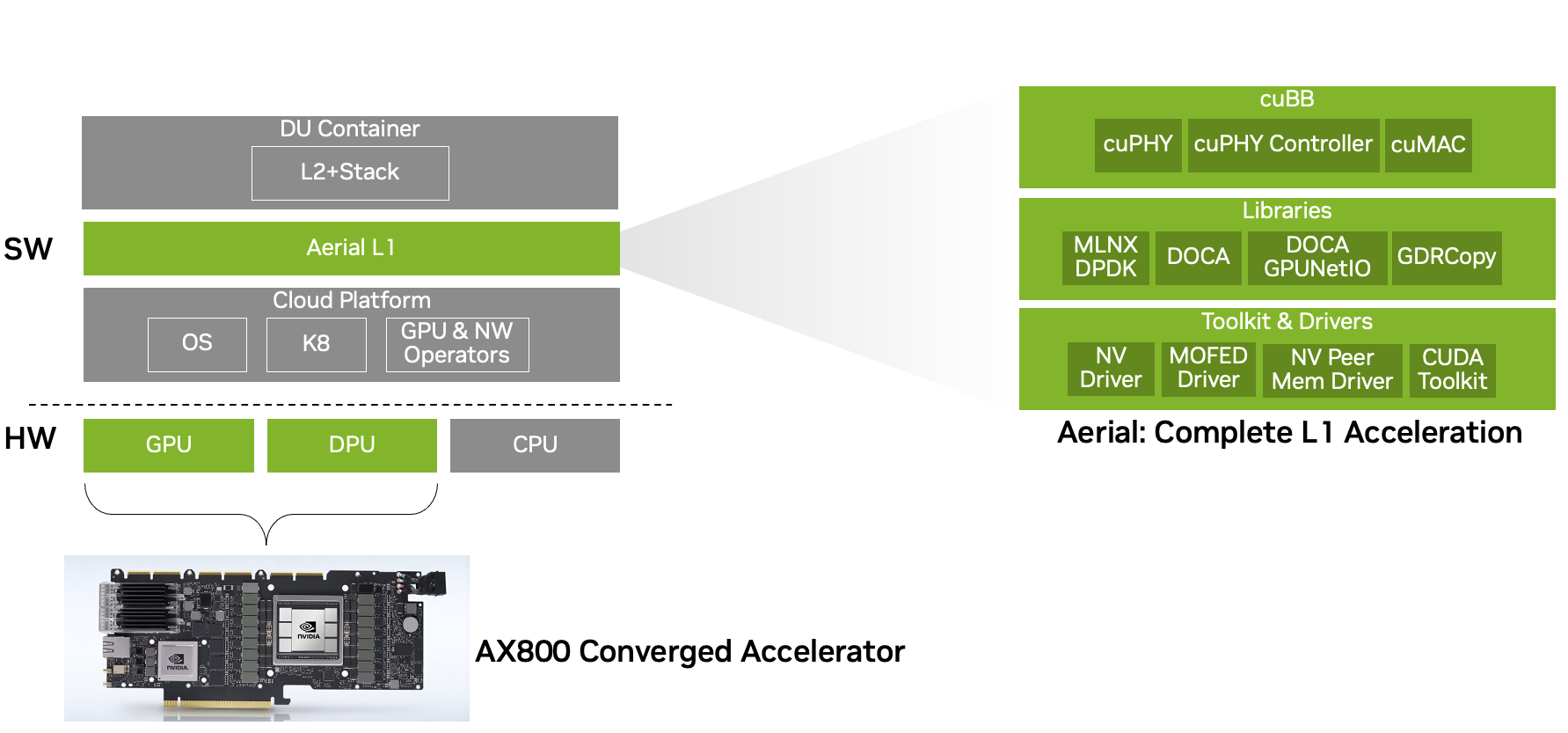

NVIDIA delivers truly cloud-native, high-performance accelerated 5G solutions on commodity hardware that can run on any cloud, using the NVIDIA AX800 converged accelerator (Figure 1).

To benefit from cloud economics, the future of the RAN is in the cloud (RAN-in-the-Cloud). The road to cloud economics is consistent with Clayton Christensen's description of disruptive innovation in traditional industries in his book The Innovator's Dilemma: When New Technologies Cause Great Firms to Fail. That is, through incremental improvement, new and seemingly inferior products can eventually capture market share.

Existing Open RAN solutions cannot currently support non-5G workloads and still deliver inferior 5G performance. Most of them still rely on single-use hardware accelerators. This limits their appeal to telecom executives, because traditional solutions, with their comparatively higher performance, offer a proven deployment path for 5G.

However, the NVIDIA accelerated 5G RAN solution based on the NVIDIA AX800 has overcome these limitations and now delivers performance comparable to traditional 5G solutions. This paves the way for deploying 5G Open RAN on commercial off-the-shelf (COTS) hardware in any public cloud or at the telecom operator edge.

Solutions that support cloud native RAN

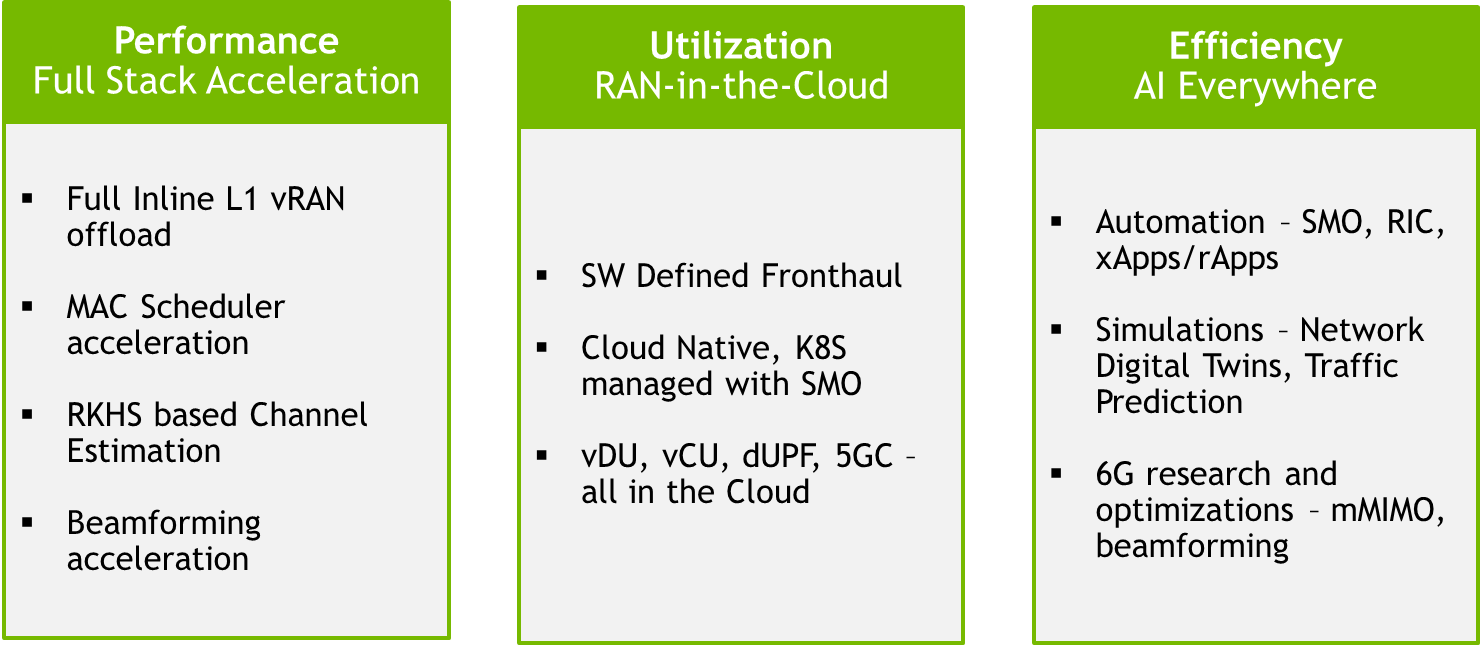

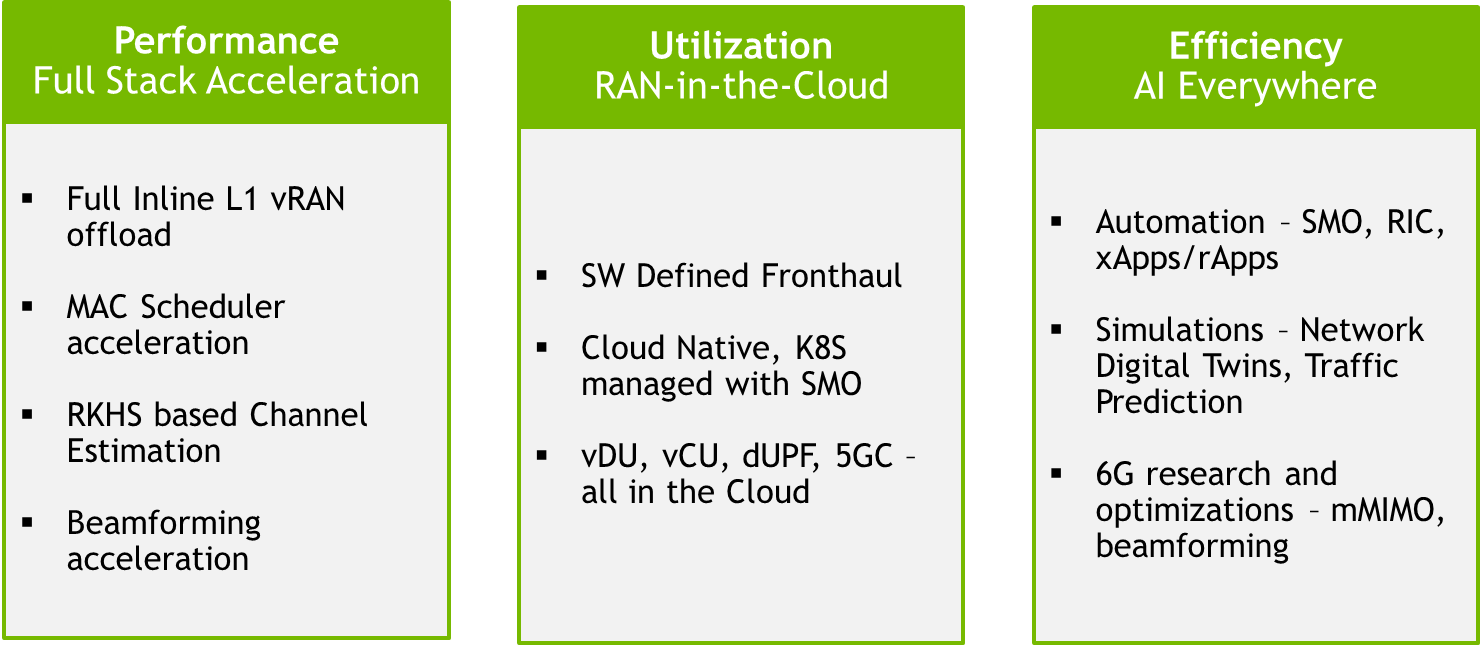

To drive broad adoption of cloud-native RAN, the industry needs solutions that are cloud native, deliver high RAN performance, and are AI capable.

Cloud native

This approach delivers better utilization, multi-use and multitenancy, lower total cost of ownership (TCO), and greater automation, with all the advantages of cloud computing and the benefits of cloud economics.

A cloud-native network that benefits from cloud economics requires a complete rethink of the approach, so that the network is 100% software defined, deployed on general-purpose hardware, and able to support multiple tenants. It is therefore not about building bespoke, dedicated systems in public or telecom clouds managed by cloud service providers (CSPs).

High RAN performance

High RAN performance is required to deliver new technologies such as massive MIMO, with its improved spectral efficiency, cell density, and higher throughput, all with better energy efficiency. Achieving performance on commodity hardware that is comparable to dedicated systems has proven to be a daunting challenge. This is due to the end of Moore's law and the relatively low performance of software running on CPUs.

As a result, RAN vendors are building fixed-function accelerators to boost CPU performance. This approach leads to inflexible solutions and falls short of the flexibility and openness expected of Open RAN. In addition, fixed-function or single-use accelerators cannot deliver the benefits of cloud economics.

For example, software-defined 5G networks based on the Open RAN specifications and COTS hardware achieve a typical peak throughput of approximately 10 Gbps, compared with a peak throughput of more than 30 Gbps on 5G networks built with the traditional, vertically integrated appliance approach using custom software and hardware.

According to a recent survey of 52 telecom executives, reported in Telecom Networks: Tracking the Coming xRAN Revolution, "In terms of the obstacles to xRAN deployment, 62% of operators are concerned about today's xRAN performance compared to traditional RAN."

Artificial intelligence capabilities

The solution must evolve from today's applications, which are based on proprietary telecom network implementations, to AI-capable infrastructure that hosts both internal and external applications. AI plays a role in 5G (AI-for-5G) by enabling automation and improving system performance. Likewise, AI works together with 5G (AI-on-5G) to enable new capabilities in 5G and beyond.

Achieving these goals requires a new architectural approach to cloud-native RAN, in particular a general-purpose, COTS-based accelerated computing platform. This is NVIDIA's focus, as shown in Figure 2.

The focus is on delivering general-purpose COTS servers built with NVIDIA converged accelerators (such as the NVIDIA AX800) that can support high-performance 5G and AI workloads on the same platform. This delivers cloud economics with better utilization and lower TCO, as well as a platform that can run AI workloads efficiently, providing a future-proof RAN for the 6G era.


Running 5G and AI workloads on the same accelerator using NVIDIA AX800

The NVIDIA AX800 converged accelerator is a game changer for CSPs and telecom operators because it brings cloud economics to the operation and management of telecom networks. The AX800 supports multi-use and multitenancy of 5G and AI workloads on commodity hardware that can run on any cloud, by scaling those workloads dynamically. This enables CSPs and telecom operators to use the same infrastructure for both 5G and AI at high utilization.
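To make the utilization argument concrete, the following is a minimal sketch of sharing one accelerator between a RAN tenant and an AI tenant. It is not NVIDIA's scheduler or any AX800 API: the function `split_capacity`, the 10% headroom, and the hourly load profile are all hypothetical values chosen for illustration.

```python
from typing import List, Tuple


def split_capacity(ran_load_pct: int, headroom_pct: int = 10) -> Tuple[int, int]:
    """Split one accelerator between RAN and AI, in whole percent.

    Hypothetical policy: reserve the current RAN load plus a fixed headroom
    for 5G, and hand whatever is left to AI workloads.
    """
    if not 0 <= ran_load_pct <= 100:
        raise ValueError("ran_load_pct must be between 0 and 100")
    ran_share = min(100, ran_load_pct + headroom_pct)
    ai_share = 100 - ran_share
    return ran_share, ai_share


def ai_shares(load_profile: List[int]) -> List[int]:
    """AI share available at each step of a (made-up) RAN load profile."""
    return [split_capacity(load)[1] for load in load_profile]


if __name__ == "__main__":
    # Illustrative load profile only: night, morning, peak, evening.
    for load in [20, 80, 95, 40]:
        ran, ai = split_capacity(load)
        print(f"RAN load {load:3d}% -> RAN share {ran:3d}%, AI share {ai:3d}%")
```

With a dedicated, single-use accelerator, the capacity left idle during low RAN load would go unused; in this toy policy it is handed to the AI tenant instead, which is the sense in which shared infrastructure raises utilization.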

Dynamic scaling for multitenancy

The NVIDIA AX800 enables dynamic scaling at the data center, server, and card levels, supporting both 5G and AI workloads. ?794Gbps DL and UL throughput.

The NVIDIA solution is also highly scalable and can support configurations from 2T2R (sub-1 GHz macro deployments) to 64T64R (massive MIMO deployments). Massive MIMO workloads with high layer counts are dominated by the computational complexity of the algorithms used to estimate and respond to channel conditions, such as the sounding reference signal (SRS) channel estimator, the channel equalizer, and beamforming.
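As a rough illustration of why layer and antenna counts dominate the compute budget, the sketch below counts complex multiply-accumulate (MAC) operations for a textbook linear MMSE equalizer on a single resource element. The function name, the operation breakdown, and the 16-layer configuration paired with 64 receive antennas are assumptions for illustration, not figures from NVIDIA or from any specific RAN implementation.

```python
def mmse_equalizer_macs(n_rx: int, n_layers: int) -> int:
    """Order-of-magnitude complex MAC count for one linear MMSE equalization.

    Textbook estimate for x_hat = (H^H H + sigma^2 I)^-1 H^H y, where H is
    an n_rx x n_layers channel matrix. Constant factors are ignored.
    """
    if n_rx < 1 or n_layers < 1:
        raise ValueError("antenna and layer counts must be positive")
    gram = n_layers * n_layers * n_rx   # H^H H
    inverse = n_layers ** 3             # matrix inverse, cubic in layers
    matched = n_layers * n_rx           # H^H y
    solve = n_layers * n_layers         # inverse times H^H y
    return gram + inverse + matched + solve


if __name__ == "__main__":
    small = mmse_equalizer_macs(n_rx=2, n_layers=2)     # 2T2R-style case
    large = mmse_equalizer_macs(n_rx=64, n_layers=16)   # assumed massive MIMO case
    print(f"2 rx, 2 layers:   {small} MACs per resource element")
    print(f"64 rx, 16 layers: {large} MACs per resource element")
    print(f"ratio: about {large / small:.0f}x")
```

Under these assumptions the per-resource-element cost grows from 24 to 21,760 MACs, roughly three orders of magnitude, before multiplying by the number of resource elements per slot. This kind of dense, regular linear algebra is well suited to parallel hardware.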

GPUs, and the AX800 in particular (which has the highest streaming multiprocessor count of any NVIDIA Ampere architecture GPU), provide an ideal solution for handling the complexity of massive MIMO workloads within a moderate power envelope.
